Deploying a PyTorch model with Triton Inference Server in 5 minutes | by Zabir Al Nazi Nabil | Medium

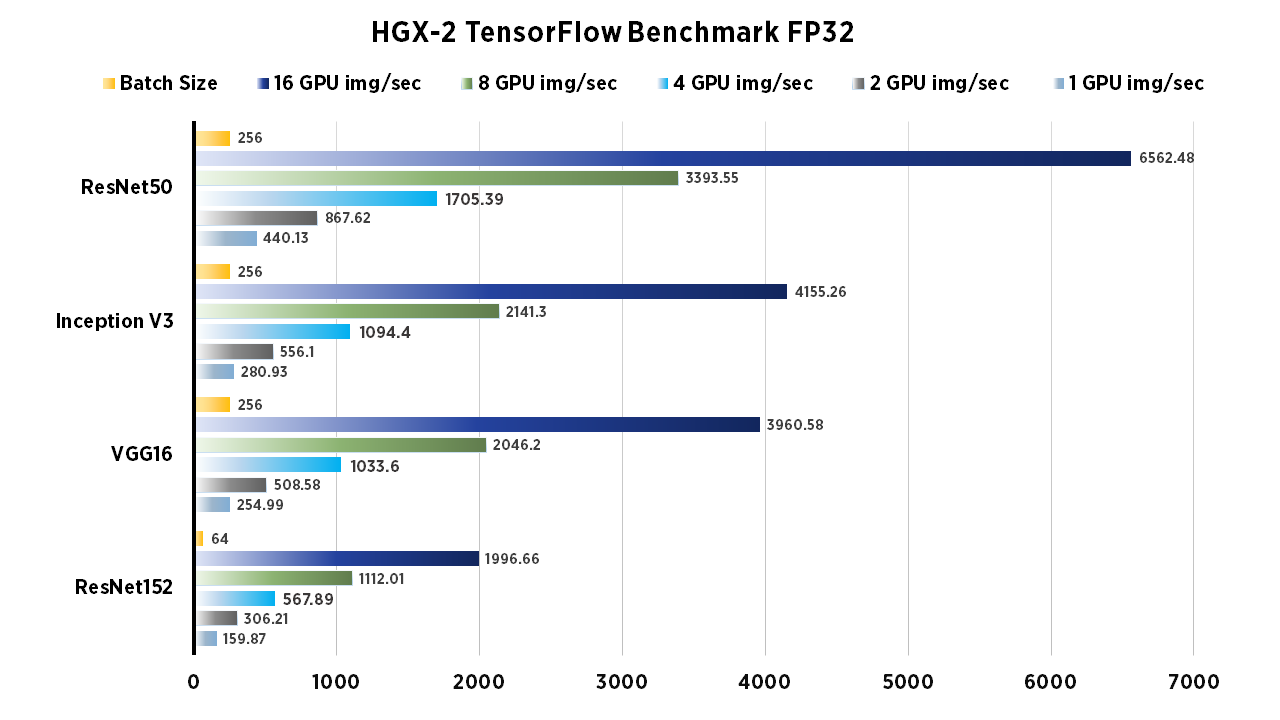

TensorFlow Performance with 1-4 GPUs -- RTX Titan, 2080Ti, 2080, 2070, GTX 1660Ti, 1070, 1080Ti, and Titan V | Puget Systems

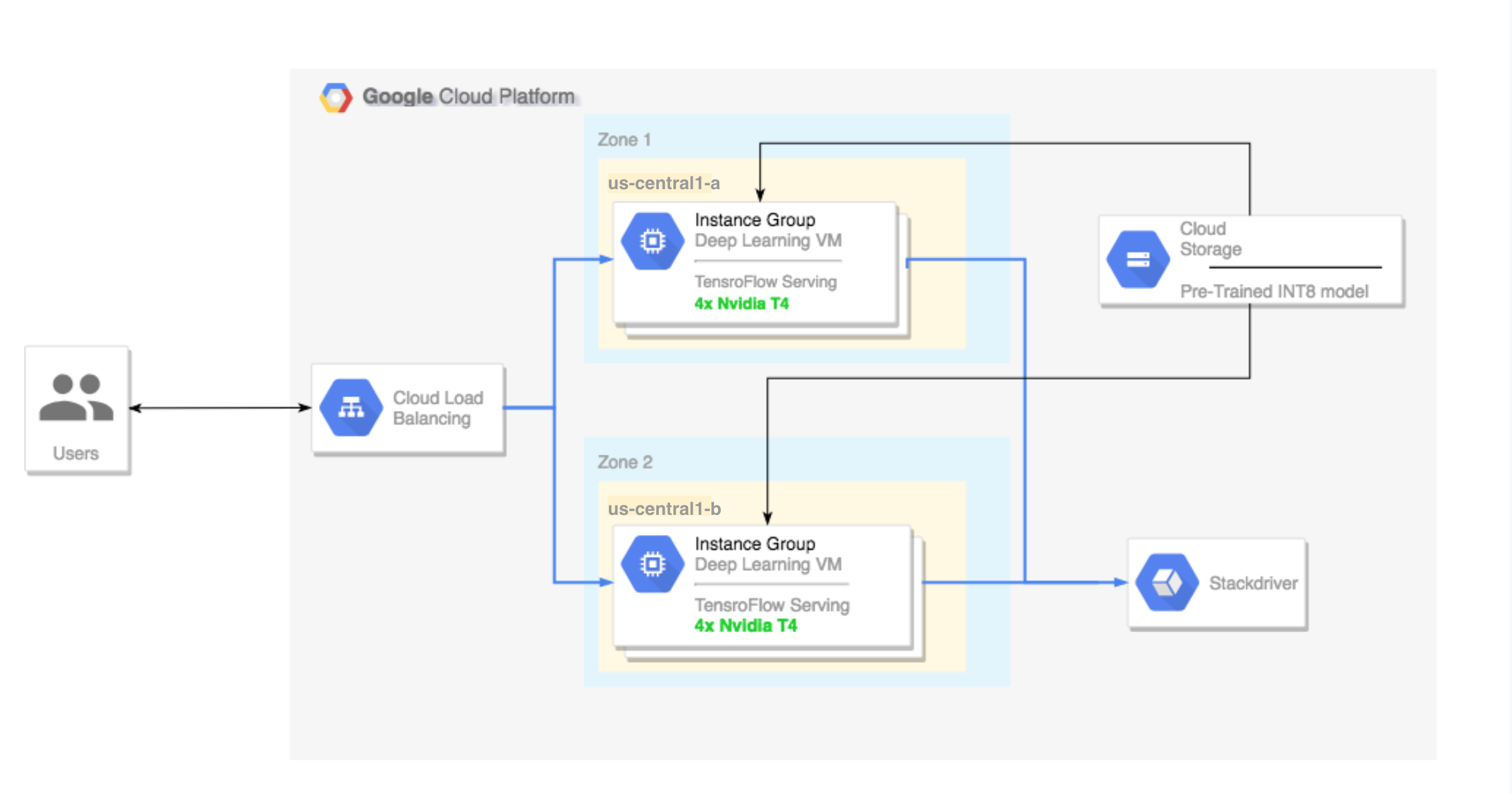

Running TensorFlow inference workloads with TensorRT5 and NVIDIA T4 GPU | Compute Engine Documentation | Google Cloud

GitHub - ai4reason/enigma-gpu-server: TensorFlow GPU server for fast evaluation with ENIGMA E Prover